Military-Grade Encryption.

Zero Configuration.

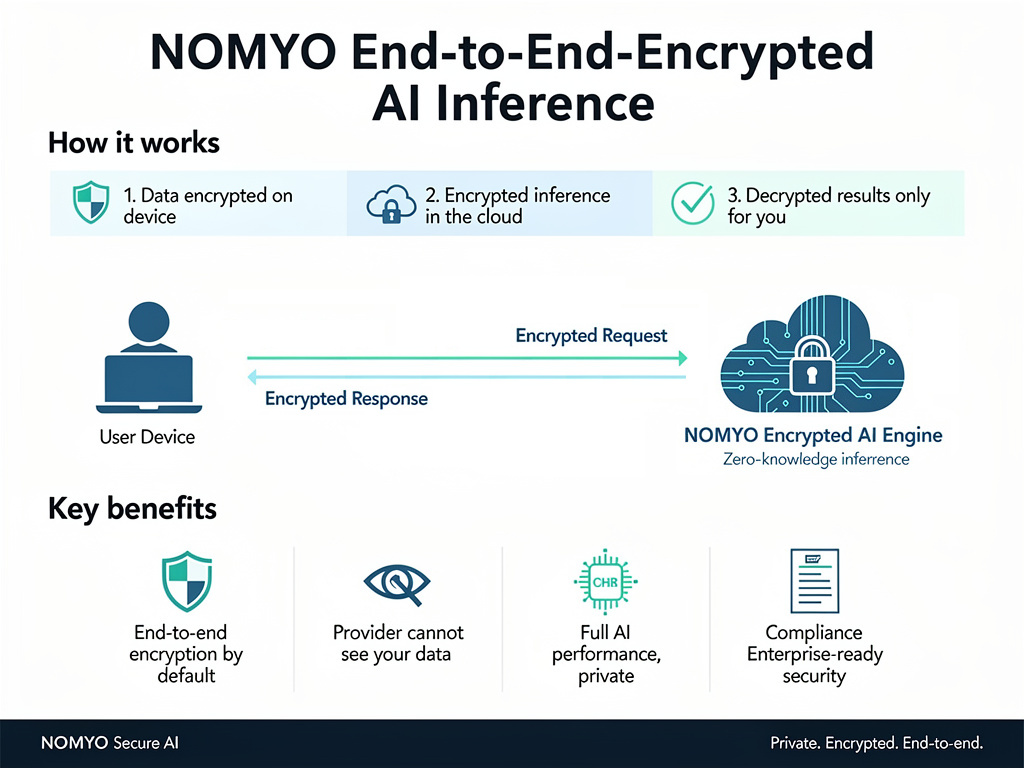

NOMYO uses a hybrid encryption scheme: AES-256-GCM encrypts the payload for speed, and RSA-OAEP (4096-bit) secures the key exchange. The result is a system that is both performant and built on standardized, well-studied primitives.

Per-request key rotation

Every inference request gets its own AES-256 key. Keys are generated with Python's secrets.token_bytes and zeroed after use.
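As a rough illustration of the idea, here is a minimal sketch of a per-request key that is wiped after use. The helper name per_request_key and the constant AES_256_KEY_BYTES are hypothetical and not part of NOMYO's API. Zeroing in Python is best-effort: the interpreter may hold other copies of the key, so this shows the pattern rather than a guarantee.

```python
import secrets
from contextlib import contextmanager

AES_256_KEY_BYTES = 32  # 256 bits; hypothetical constant name


@contextmanager
def per_request_key():
    """Yield a fresh random AES-256 key and overwrite it when the block exits."""
    # A bytearray is mutable, so it can be overwritten in place.
    # The temporary bytes object returned by token_bytes cannot be wiped
    # and is left to the garbage collector.
    key = bytearray(secrets.token_bytes(AES_256_KEY_BYTES))
    try:
        yield key
    finally:
        # Best-effort zeroing of the buffer we control.
        for i in range(len(key)):
            key[i] = 0
```

A caller would wrap each request in its own `with per_request_key() as key:` block, so no key outlives the request it was created for.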

RSA-OAEP key exchange

4096-bit RSA keys establish the secure channel. Server public key fingerprint verification prevents MITM attacks.
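Fingerprint verification can be sketched as follows. This assumes the fingerprint is a SHA-256 digest over the DER-encoded public key, which is a common convention; the source does not say how NOMYO computes it. The function names are hypothetical.

```python
import hashlib
import hmac


def fingerprint(public_key_der: bytes) -> str:
    """Return the hex SHA-256 digest of a DER-encoded public key (assumed format)."""
    return hashlib.sha256(public_key_der).hexdigest()


def verify_server_key(public_key_der: bytes, pinned_fingerprint: str) -> bool:
    """Check a received server key against a fingerprint obtained out of band."""
    # Accept both "ab:cd:..." and "abcd..." spellings, in any letter case.
    normalized = pinned_fingerprint.replace(":", "").strip().lower()
    actual = fingerprint(public_key_der)
    # Constant-time comparison of the two digests.
    return hmac.compare_digest(actual.encode(), normalized.encode())
```

A client would refuse to encrypt a session key to any server key for which verify_server_key returns False, since a mismatch suggests the key was substituted in transit.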

Memory protection

Plaintext payloads are protected from being swapped to disk or captured in memory dumps. All crypto material is zeroed immediately after encryption.

Hardware-rooted Attestation

TPM and SGX attestation verify the integrity of the inference environment. High and Maximum security tiers execute inside a Trusted Execution Environment (TEE), guaranteeing that plaintext never touches untrusted hardware.

Built for Compliance

HIPAA, GDPR Art. 32, PCI DSS v4.0, NIST 800-53, SOC 2, ISO 27001, FedRAMP, GLBA: the same property (plaintext never leaves your control) addresses the core technical requirements across all of these frameworks.